The Dream Machine: Mankind's Pursuit to Engineer Stronger Humans

What is Human Augmentation?

Human augmentation (a.k.a. Human 2.0) seeks to enhance and exceed the natural cognitive and physical abilities of people through integrated technology. Applications range from cochlear implants that increase sensory perception to orthotics that enhance motion or muscle capability. Some researchers are even working on data-connecting devices that would link the human body to visual/text-based sources of information. A decade or so ago, most people probably would have associated a “power suit” with corporate attire. The notion of a metal “skin” with motors to enhance human strength far beyond normal capability, once science fiction, is now “science fact.” In this article, we take a closer look at the evolution of wearable and remote-controlled robots, and at how an iterative design process has enabled incremental improvements in the pursuit of bionic humans.

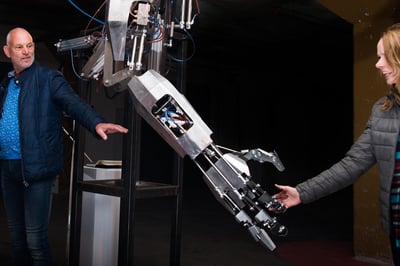

"Freerk Wieringa – Exoskeleton" by Manifestations_ is licensed under CC BY-NC 2.0

Evolution of Human Augmentation Technology

While the enabling technology is new, the idea of human augmentation is not. In fact, Leonardo da Vinci made one of the earliest known drawings of a robot in 1495. More recently, the notion of a body with mechanical muscles appeared in science fiction over 150 years ago, in Edward Ellis’ 1868 novel, The Steam Man of the Prairies. In the book, he describes a giant humanoid steam engine that could tow people at speeds of 60 miles per hour while chasing buffaloes and Indians.

In 1961 (two years before the debut of Marvel Comics’ Iron Man), the Pentagon invited proposals for wearable robots for a “servo soldier” that was to be something akin to a human tank, with power steering and brakes that would allow soldiers to run faster, lift heavy objects, and be immune from heat, radiation and chemical weapons. By the mid-60s, Neil Mizen of Cornell University had prototyped a wearable exoskeleton that the media referred to as the “superman suit” or “man amplifier.” At about the same time, General Electric developed plans for a “pedipulator,” an 18-foot-tall device that would carry its operator inside. By the 1980s, Los Alamos National Laboratory scientists had designed the Pitman Suit, a full-body exoskeleton for use by Army infantry personnel, but the design was impractical and never went into production. Ten years later, the Army developed a device akin to the Iron Man suit, but this project never went into production, either. The early designs were stymied by limited technology: computers lacked the capacity and speed to respond to a wearer’s commands and movements, energy supplies were not portable enough, and the electromechanical “muscles,” known as actuators, were too weak and bulky to function like human muscles. Nevertheless, scientists continued to pursue research and development.

In the 2000s, real advancements began to occur with the Defense Advanced Research Projects Agency (DARPA), the Pentagon’s innovation incubator. DARPA had $75 million in funding for an Iron Man suit that would allow soldiers to carry hundreds of pounds of gear and weapons, as well as wounded soldiers on their backs. The device also needed to be impervious to gunfire and able to jump very high. In 2005, Sarcos (later acquired by Raytheon) developed XOS, a prototype close to DARPA’s vision, with sensors that detected the contractions of the user’s muscles and regulated the flow of high-pressure hydraulic fluid to the mechanical joints. The joints, in turn, moved cylinders with attached cables to simulate human muscle movement. Concurrently, Berkeley Bionics developed the Human Universal Load Carrier, which required less energy and could power an exoskeleton for 20 hours without recharging. A few years later, Cyberdyne debuted the HAL Robot Suit, which incorporated sensors to pick up the electrical signals sent from the user’s brain to the muscles instead of relying on the user’s muscle contractions to operate the suit.

"A very spiffy exoskeleton." by gunnsteinlye is licensed under CC BY-NC-SA 2.0

Robot Design Process

Work on human augmentation has accelerated thanks to the maturation of the robot design process. One of the most commonly practiced processes is the Robot Design Framework (RDL). The first step is to define the problem and identify the objectives, which involves homing in on real needs. To do so, the design and engineering team must approach the project with strategic consideration of customer, environmental and societal input. After clearly identifying the problem, they prioritize the overall objectives, define every foreseeable constraint, and examine several potential scenarios and outcomes. Finally, the team produces a design brief that serves as a working document for the project.

With brief in hand, the second step is research and brainstorming. The team decides what information is required for each phase of the project. Research parameters depend on several factors, for example: efficiency in the design’s practical functions; mechanical details supporting how the robot moves and manipulates other objects; how programming dictates the robot’s thinking and learning capabilities; and its power source. Many technical and economic factors must be addressed prior to resource allocation.

Brainstorming the design often begins with sketching ideas on paper. Sometimes it takes hundreds of attempts before a feasible idea is finally translated into CAD drawings and models. CAD modeling for virtual prototyping and testing reveals any major design flaws prior to building a prototype. The level of teamwork behind research and design is closely tied to the project’s end results; hence, it is important for teams to prioritize communication and practice accountability.

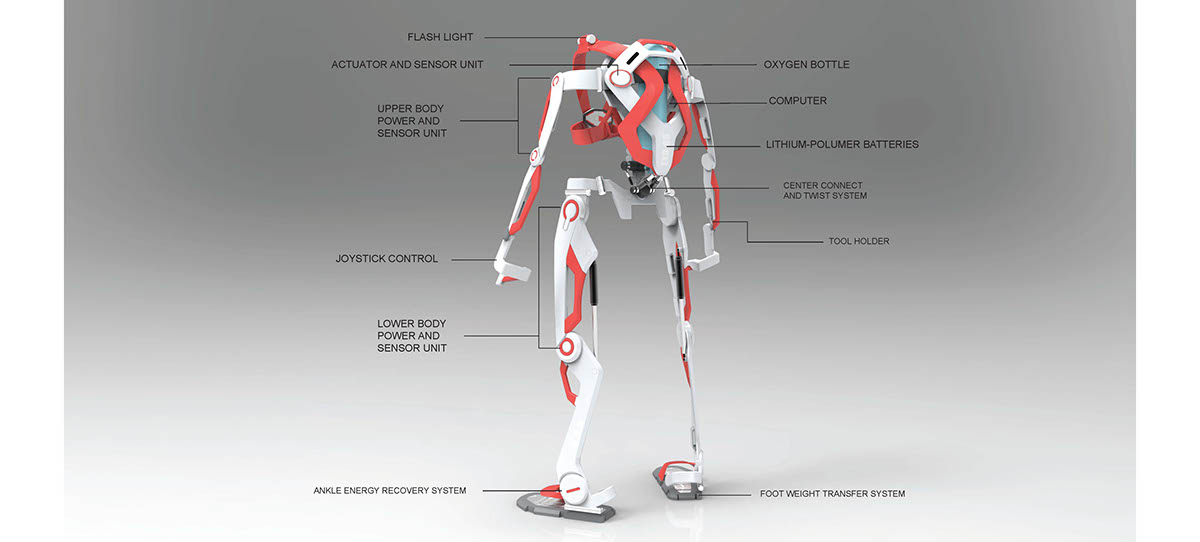

"A.F.A.-Powered Exoskeleton Suit for Firefighter" by Jiazhen (Ken) CHEN is licensed under CC BY-NC-ND 4.0

The third step is building a prototype, or several if needed. This phase can start with something as simple as a Lego model, but should ideally yield a proof of concept. 3D printers have become enormously valuable for prototyping designs, not necessarily to prove the design of an entire robot but to test the more complex components within. Before the days of additive manufacturing (a.k.a. 3D printing), custom-designed components had to be outsourced to specialized manufacturers, a commitment that was both costly and time-consuming. Nowadays, with advancements in computing power, robotics, and filament materials, 3D printing is an affordable way for engineers and designers to test their ideas before moving forward. Rather than relying on virtual testing or mere Lego models to demonstrate designs, engineers can produce realistic parts and pieces in minutes to hours using off-the-shelf 3D printers. Accurate prototyping reduces the risk of failure by revealing major design flaws or potential mechanical issues before large investments are made in labor and material.

Preliminary models may be miniature versions of the end product, yet they are unlikely to be made with the same materials. So, even with sophisticated printing technology, a successful end result is not guaranteed. However, the team’s analysis of each prototype should confirm that the design will, in fact, solve the problems defined in step one. If not, the process needs to loop back for refinement or redesign. Whatever form the prototype takes, the goal of this step is to produce a guide with working measurements, components, and assembly instructions.

The fourth step is building the robot. The build is where the true materials, costs, processes, limitations, and mechanical functions are revealed. The more efficient the design process, the smoother the build. In many cases, companies can begin making a return on investment while still in the construction phase by marketing and pre-selling the product.

The fifth step is to test the robot. Testing occurs on two levels. First, systems testing at various stages of construction is imperative. If flaws, such as a joint failure, are revealed, modifications to the plan may be necessary. Second, when building is complete, the entire project needs to be evaluated to prove the application “does the job” as intended. Evaluations should always follow a formal process and be recorded, including an outline of strengths and weaknesses to specify where the design succeeded and failed according to the objectives. Evaluations serve as tools for progress and should include observations about how well the design functions, how it looks, its safety features, the suitability of materials, a breakdown of costs, and finally how the design can be improved. Typically, a successful first run becomes “the original” for subsequent versions.
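One way to keep evaluations formal and recorded is to give every test run the same structure. The sketch below is a minimal illustration in Python, not part of any published framework: the `EvaluationRecord` class, its field names, and the sample objectives are all hypothetical, chosen only to mirror the items listed above (objectives, strengths, weaknesses, and improvements).

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class EvaluationRecord:
    """Hypothetical record of one formal evaluation of a design."""
    design_name: str
    # Maps each objective from the design brief to whether the test met it.
    objectives_met: Dict[str, bool]
    strengths: List[str] = field(default_factory=list)
    weaknesses: List[str] = field(default_factory=list)
    improvements: List[str] = field(default_factory=list)

    def unmet_objectives(self) -> List[str]:
        """Return the objectives the design failed, in the order recorded."""
        return [name for name, met in self.objectives_met.items() if not met]

    def passed(self) -> bool:
        """A design passes only when every recorded objective was met."""
        return not self.unmet_objectives()


# Illustrative data only; these objectives are invented for the example.
record = EvaluationRecord(
    design_name="knee joint, prototype 1",
    objectives_met={
        "supports target load": True,
        "joint survives repeated cycling": False,
    },
    strengths=["light frame"],
    weaknesses=["joint failure under repeated load"],
    improvements=["revise joint design and retest"],
)

print(record.passed())            # False
print(record.unmet_objectives())  # ['joint survives repeated cycling']
```

A record that fails `passed()` signals that the process should loop back to refinement or redesign, and the list of unmet objectives shows where to start.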

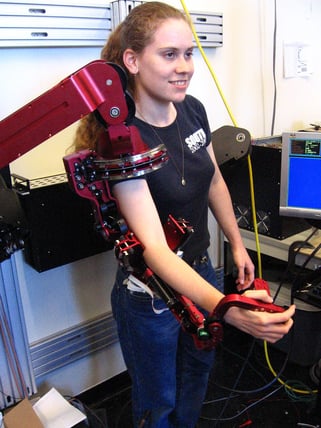

"exo 014" by ewedistrict is licensed under CC BY-SA 2.0

Successive improvements often come from third-party engineers and manufacturers who offer a “fresh set of eyes” on the initial design. For example, the da Vinci Surgical System, a robot-assisted application to enhance surgical precision, is the result of a design refined by a coalition of engineers and manufacturers. Approved by the FDA in 2000, the da Vinci was among the first of its kind to demonstrate that machines can serve not only as tools for humans, but also as extensions of humans.

The da Vinci Surgical System

Advancements in exoskeleton and robotics research, testing, and experimentation have led to lighter, more flexible, and more functional devices. One of the most significant applications of robot technology is surgery, and a leader in the field is Intuitive’s da Vinci Surgical System. The System enables surgeons to perform minimally invasive surgery with advanced instruments and a 3-D high-definition view of the surgical site. In cardiac applications, it can be used in place of open-heart surgery. Other applications include colorectal procedures, hysterectomies, open mouth procedures, and thoracic surgery. Prior to the invention of the da Vinci, surgeons had to make large incisions in skin and muscle (open surgery) so that they could directly see and work on the targeted areas. While doctors still perform open surgery, many procedures in the abdomen and digestive tract, for example, can use minimally invasive laparoscopic or robotic-assisted surgery with da Vinci, which requires only one or a few small incisions to insert surgical equipment and a camera for viewing. Patients experience fewer complications and shorter recovery times on average than with open surgery.

The System has three components: a surgical console, a patient-side cart, and a vision cart. The surgical console is where the surgeon sits and controls the instruments with a 3D view of the patient’s anatomy. The instruments mimic human wrist movement, but with greater precision. Located near the operating table, the patient-side cart holds the instruments the surgeon will use during the procedure. The instruments respond in real time to the surgeon’s hand movements at the console. The vision cart supports the high-definition vision system and is the conduit that enables communication between the system components.

The first generation of the da Vinci (1999) consisted of an ergonomically designed surgeon's console, a patient-side cart with four interactive robotic arms, a high-performance vision system and proprietary wristed instruments. The surgeon's hand movements were scaled, filtered and seamlessly translated through an intuitive interface into precise movements of the instruments. Since then, improvements have included a single port access instrument that allows deep and narrow access to patient tissues through a single incision or natural orifice while maintaining high quality vision, precision, and control, and an articulating robotic catheter that allows a surgeon to navigate the catheter through small airways to reach nodules. Other improvements have come not from the original inventor, Intuitive, but from third parties.
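To make “scaled, filtered and translated” concrete, the sketch below shows the general idea in Python along a single axis. It is an illustration under stated assumptions, not Intuitive’s implementation: the `MotionScaler` class, the 5:1 scale factor, and the simple exponential smoothing filter are all hypothetical choices for the example.

```python
from dataclasses import dataclass, field


@dataclass
class MotionScaler:
    """Hypothetical one-axis model of motion scaling with smoothing."""
    scale: float = 0.2   # assumed 5:1 scaling: 10 mm of hand travel -> 2 mm
    alpha: float = 0.5   # assumed smoothing factor, between 0 and 1
    _filtered_mm: float = field(default=0.0, init=False)

    def step(self, hand_position_mm: float) -> float:
        """Smooth the latest hand position, then scale it for the instrument."""
        # Exponential moving average: blends the new sample with the running
        # estimate, which damps rapid jitter such as hand tremor.
        self._filtered_mm = (
            self.alpha * hand_position_mm
            + (1.0 - self.alpha) * self._filtered_mm
        )
        return self.scale * self._filtered_mm


# Illustrative samples: a hand held near 10 mm with about 1 mm of jitter.
scaler = MotionScaler()
jittery_hand_mm = [10.0, 11.0, 9.0, 10.5, 9.5]
instrument_mm = [scaler.step(position) for position in jittery_hand_mm]
print([round(value, 3) for value in instrument_mm])
```

A smaller `alpha` smooths more aggressively but makes the instrument lag further behind the hand, so any real system has to balance tremor rejection against responsiveness.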

Incremental improvements, such as noise reduction, underscore the importance of broad-based innovation and an iterative design process. Involving experienced, niche engineers in the process can turn a good solution into a great one.

As human augmentation continues to evolve, we can expect more innovation, especially in next-generation robots that incorporate artificial intelligence. From da Vinci the man to da Vinci the robot, the journey is both fascinating and incredible.

Feature Image Credit:

"Iron Man Wallpaper" by bharath yes is licensed under CC BY-NC-SA 2.0